We watched a good product die slowly.

Our team had delivered a validated concept for a client: a Figma prototype, a video demonstrating value, strong research backing the direction. The work was solid. The client was engaged. Then our engagement ended, and the project hit the gap. The client needed board funding, a build team, and a development roadmap. None of those are unreasonable steps. But each one is a place where momentum stalls. And without a clear milestone pulling things forward, it did.

Nobody killed that project. There was no meeting where someone said "we're not doing this." It just stopped getting talked about. That's how most innovation projects end: not with a decision, but with silence.

This is a structural problem, not a people problem. The distance between "validated concept" and "thing that exists in the world" is long. Every handoff leaks momentum: design to development, development to compliance, compliance to executive approval. Each layer has its own timeline, its own stakeholders, its own capacity to slow things down. In regulated industries, add legal review, testing protocols, and audit-ready documentation to that chain.

The quality of the idea isn't enough. The process between validation and reality is where good products go to die.

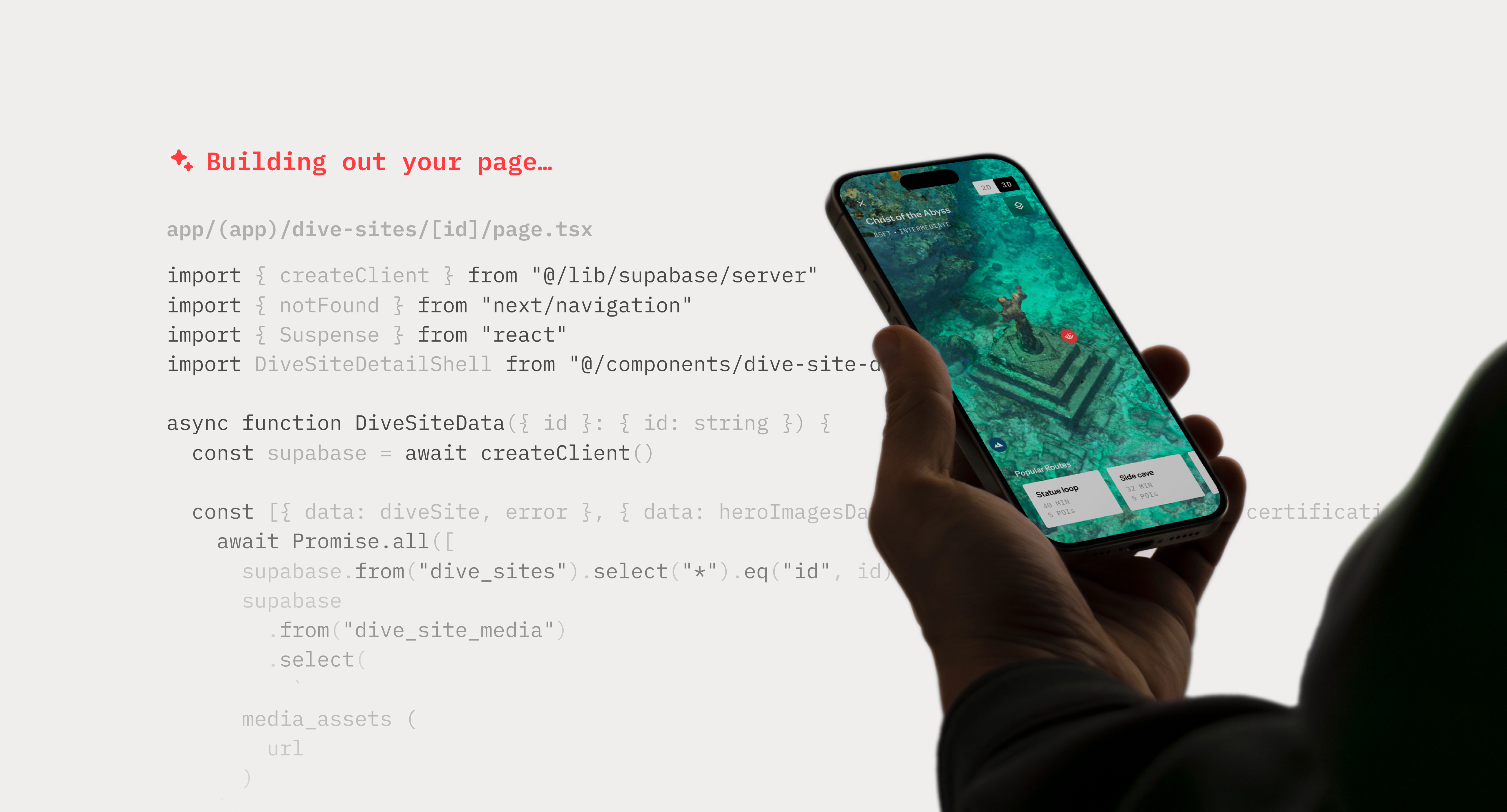

AI-assisted prototyping, what the industry calls vibe coding, extends what the design process can produce. We describe a product or feature idea in natural language and generative AI writes the code to build it. That works two ways in practice: quick prototypes during early exploration to test directions fast, and polished functional builds directly from existing Figma designs. No separate engineering team. No handoff. Something real, in days.

Two things change at once: speed and fidelity. The timeline from sketches to something people can actually use compresses from weeks to days. And the prototype isn't a simulation anymore. It's a working app. Every button works. Data persists to a database. Complex interactions that are impossible to fake in Figma, like rotating a 3D model or filtering live data, work seamlessly. For someone used to evaluating Figma prototypes, the first reaction is usually "wait, this actually works?"

AI-assisted prototyping doesn't just move faster. It changes the outcome at every stage where projects typically stall. The handoff chain that kills most innovation projects doesn't disappear: research to design, design to engineering, engineering to executive approval. But arriving at each stage with something real, built in days instead of months, means momentum is already established before the institutional machinery kicks in. Here's what that looks like in practice.

Better ideas, faster

Traditional co-creation is a translation exercise. We saw this friction in a co-creation workshop we ran during a project engagement: a participant describes an idea, a designer interprets and sketches it, then someone digitizes it afterward. Each translation step takes time and introduces drift. The idea that gets built is never quite the idea that was described. And because the designer is the bottleneck, the pace of the session is limited by how fast they can put pen to paper, not how fast participants can think.

AI-assisted prototyping removes the translator. Participants describe what they want to the AI and see a functional version being built directly. The iteration is immediate: "what if this button did something different," "what if the data was organized this way." The session stops stalling at the translation layer and starts moving at the speed of ideas.

Better feedback, earlier

The feedback you get from research is only as good as what you put in front of people.

With a Figma prototype, users critique the demo. They flag broken interactions, missing screens, moments where the illusion breaks down. That's useful for catching usability problems, but it's not feedback about the product. It's feedback about the prototype. The session is implicitly about whether the demo works, not whether the idea does.

AI-assisted prototyping shifts that entirely. When something is fully functional, users stop troubleshooting and start reacting. "This would be really helpful in the field." "I wish it could also do X." They're evaluating the idea on its own terms, not navigating around its limitations.

The nature of the feedback changes too. A scripted prototype leads users through a predetermined path, which means the feedback you get is about the path you already designed. A functional build lets users explore freely. They go off script. They try things you didn't anticipate. That unscripted behavior is often where the most valuable signal lives: the workflow you hadn't considered, the use case you didn't know existed.

Less friction at the engineering handoff

Most development timelines don't blow up in engineering. They blow up at the moment engineering receives the handoff and has to start asking questions. How does this component behave? What happens when the data doesn't load? What's the intended state for this edge case?

AI-assisted prototyping means those questions get answered during the design phase, not after. APIs are already researched. Data models are tested against real conditions. Architecture decisions are established and observable, not implied by a Figma annotation. What remains for the engineering team are production concerns such as security, scalability, and compliance. That's a tighter scope, a faster start, and one less place for momentum to leak.

From pitch to proof

For the stakeholders who ultimately decide whether a project lives or dies, the difference is simple. A Figma prototype still needs an engineering team, a timeline, and a budget ask. Compare that to something built through AI-assisted prototyping: something people have already used, that has usage data behind it, that engineers can actually open and build from. That's a different kind of ask entirely. One is a vision. The other is evidence of a vision that already works.

That's what closes the gap. Not faster design. Not cheaper engineering. A process that generates real evidence before the institutional machinery requires it.

If the tools are this accessible, why not just do it yourself? It’s a fair question. A founder can prompt an AI and get something that technically works.

The short answer is that accessibility makes building cheaper and faster, but it doesn’t improve the quality of decisions. And in product development, bad decisions are what create most of the risk. Building something that runs is not the same as creating something people can actually use, trust, and depend on.

Take a clinician-facing product. A vibe-coded version might surface all the right data (vitals, trends, alerts, historical records), but everything carries equal weight. Dense tables, generic charts, no clear prioritization. To understand what’s happening, the clinician has to stop and read. That’s the failure mode. Not missing functionality, but missing clarity.

A designed version approaches the same information differently. What needs to be seen first, in seconds, under pressure? What signals urgency? What can stay in the background? Critical values surface immediately, trends are readable at a glance, and supporting data is accessible without competing for attention. The interface does the triaging, so the clinician doesn’t have to. That’s not visual polish. That’s usability under real conditions.

The same gap shows up in interaction. A vibe-coded product might load data with no feedback, pause, then update. Technically correct, but disorienting. A designed system introduces loading states, preserves context, and communicates what’s happening so the user stays oriented. Small decisions, but they’re the difference between something that works and something people trust.

This is what design expertise produces. Not a visual style, but judgment. Knowing what to prioritize, what to simplify, and how people behave under pressure. And that judgment matters most before anything is built.

That judgment compounds when it meets domain expertise. The founders and stakeholders who tend to produce the best outcomes aren't trying to supply both themselves. They bring what only they can: deep knowledge of the problem, passion for solving it, and conviction about what success looks like in their world. Design translates that into something usable, coherent, and built to last. Neither works as well without the other. The combination is what closes the gap between vision and something real.

That project we watched die didn't have to end that way. The concept was solid. The client believed in it. What it needed was something real to carry it through the gap — evidence a board could react to, a foundation engineers could build from, something users had already touched and responded to.

We're a design studio that has always worked at the intersection of design, technology, and strategy. AI-assisted prototyping is the latest addition to that practice, and the most significant one in years. If you have a concept stuck in a pipeline, or a vision that needs to become tangible, the path from idea to something real has never been shorter. Let's build it together!